| posted 1 September 2003 |

COPY-AND-PASTE CITATION William H. Calvin, A Brief History of the Mind (Oxford University Press 2004), chapter 7. See also http://WilliamCalvin.com/BHM/ch7.htm |

William H. Calvin |

Cliff overhanging modern buildings, Les Eyzies de Tayac, France

Cro-Magnon rock shelter at base of overhang, Les Eyzies

Behaviorally modern Homo sapiens sapiens and their tools from about 28,000 years ago were initially found here in 1868. Similar rock shelters in the area subsequently yielded more ancient Neanderthals and flake toolmaking.

7 Homo sapiens without the Modern Mind

The big brain but not much to show for it

If the ape-to-human saga were a movie, we’d appear only in the final few minutes – and a silent movie might have sufficed. By the middle of the previous ice age, about 200,000 to 150,000 years ago, the DNA dating suggests that there were less heavily built people around Africa who looked a lot like us, big brains and all. And now there are some skulls from Ethiopia dated at 160,000 years ago. If they did have language but hadn’t yet made it past the short sentence stage, a silent movie might still suffice because that kind of language employs a lot of redundancy. You can often guess what’s being said from the situation, the gestures, the facial expressions, and the postures. But long sentences involving syntax would surely need the soundtrack – unless, of course, structured sign language came before spoken language, always a possibility. (It’s still not clear when vocalizations became such a dominant medium for the longer communications.) But what’s new with modern-looking Homo sapiens? That news is so minor that it gets lost in the aforementioned dramas.

The Neanderthals came on the anthropological scene with the discovery in 1856 in the Neander valley (“tal” in the modern German spelling, “thal” in the former spelling) near Düsseldorf. The first modern human skeletons to be recognized as undoubtedly ancient were discovered in France in 1868, surely contributing to the French sense of refinement over the Germans. (English pride erupted in 1912 with the discovery of Piltdown man, but it turned out to be an elaborate fraud, uncovered only in 1949 by the invention of the fluorine absorption dating method. But at least the English had Charles Darwin to brag about.) The “Cro-Magnons” came from a rock shelter in the Dordogne valley that was being excavated as part of the construction of a railroad. The rock shelter is nestled, like much of the town of Les Eyzies de Tayac, under the overhang of a towering limestone cliff. It can be visited today at the rear of the Hotel Cro-Magnon. The bones came from a layer, about 28,000 years old, that also yielded the bones of lions, mammoths, and reindeer. Tools were also found there in somewhat deeper layers, made in an early behaviorally modern style that archaeologists call the Aurignacian, associated with an advanced culture known for its sophisticated rock-art paintings and finely crafted tools of antler, bone, and ivory. Many other early modern humans have been found since, mostly in Europe – but the oldest ones are from Africa and Israel at dates well before behaviorally modern tools and art appear on the scene. That’s where the modern distinction comes from, of anatomically modern (Homo sapiens) and then behaviorally modern in addition (sometimes called Homo sapiens sapiens, though probably not doubly wise so much as considerably more creative). The distinction between Homo sapiens and the earlier Neanderthals is based on looks, not brain size or behavior. So what makes a skull look modern? 
The sapiens forehead is often vertical, compared to Neanderthals or what came before, and the sides of the head are often vertical as well (in many earlier skulls, the right and left sides are convex). Think of our modern heads as slab-sided, like one of those pop-up tops that allows someone to stand up inside the vehicle when camping. But the back of the modern head is relatively rounded and has lost the ridge that big neck muscle insertions tend to produce in more heavily built species. Though some moderns have brow ridges up front, most do not. The face below the eyes is also relatively flat, nestling beneath the braincase, and later there is often a protruding chin. (Both likely represent a reduction in the bone needed to support the teeth, another sign that food preparation had gotten a lot better.) The sapiens skeleton below the neck is less robustly built as well. Neanderthals, while often tall, had shorter forearms and lower legs, and virtually no waist because the rib cage is larger and more bell shaped. Their bones were often thicker, even in childhood when heavy use isn’t an issue. Overall, the impression is that we moderns are much more lightly built than the Neanderthals, even when the same height, much as normal cars and “Neanderthal” SUVs can still fit the same parking space. Apparently we didn’t have to be as tough – and it wasn’t because the climate was better. If anything, the climate was worse, abruptly flipping back and forth in addition to the slower ice age rollercoaster. So something was starting to substitute for brawn. Neanderthals were living longer than Homo erectus did; the proportion of individuals over 30 years old to those of young adults grew somewhat at each step from Australopithecine to erectus to Neanderthal; the big step in middle-age survival was when modern humans came on the scene. In an age before writing, older adults were the major source of information about what had happened before. 
Was this just techniques and memory for places, or was language augmenting this potential for expanding culture? The paleoanthropologist Ian Tattersall thinks that Neanderthals had "an essentially symbol-free culture," lacking the cognitive ability to reduce the world around them to symbols expressed in words and art. Perhaps not even words, the protolanguage that I speculated about back at the 750,000-year chapter. One could make the same argument for the anatomically modern Homo sapiens in Africa. A lot still needs sorting out, but I’d emphasize that cognition isn’t just sensory symbols; it’s also movement planning, and that shows up in different ways, like those javelins in the common ancestor, 400,000 years ago.

Throwing is only one of the ballistic movements and you might wonder if hammering, clubbing, kicking and spitting have a similar problem to solve. But they are mostly set pieces like dart throws, not needing a novel plan except for the slow positioning that precedes the action. None has the high angular velocity of the elbow and wrist that complicates timing things. With wide launch windows, it isn’t such a difficult task for the brain during “get set.” So I’d bet on throwing as the “get-set” task that could really drive brain evolution – particularly because it is associated with such an immediate payoff when done right. When did spare-time uses develop for the movement planning neural machinery for throwing? Hammering was likely the earliest, though I wouldn’t push cause and effect too far here. With shared machinery, you can have coevolution with synergies: better throwing might improve, in passing, the ability to hammer accurately. And vice versa. When did the multistage planning abilities get used on a different time scale, say the hours-to-days time scale of an agenda that you keep in mind and revisit, to monitor progress and revise? That’s harder but many people would note that an ability to live in the temperate midlatitudes requires getting through the months called wintertime when most plants are dormant and shelter is essential. Absent food storage, it suggests they were already able to eat meat all winter. Clearly Homo erectus managed all this in the Caucasus Mountains, at the same latitude and continental climate as Chicago, by 1.7 million years ago – so most of the wintertime arguments we make about hominids are applicable to much earlier dates than Homo sapiens. When did secondary use spread from hand-arm movements to oral-facial ones? Or to making coherent combinations of more symbolic stuff, not just movement commands? 
One candidate for both is at 50,000 years ago, as that’s when behaviorally modern capabilities seem to have kicked in and launched people like us out of Africa and around the world.

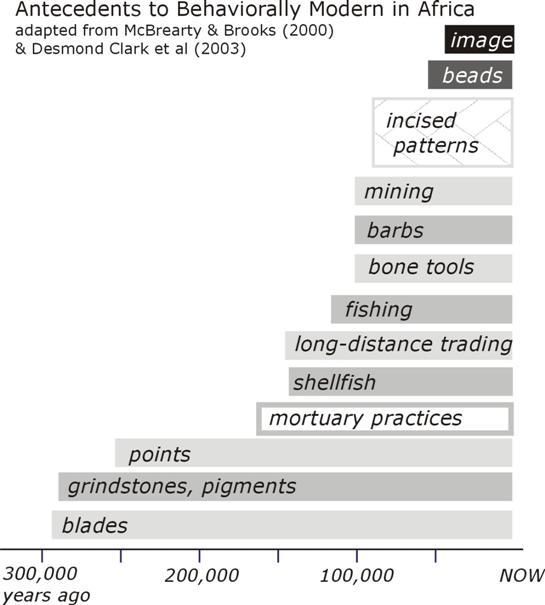

What were the early moderns doing that was different from what came before? Is there a gradual ramp up to the behaviorally modern efflorescence? Or was there 100,000 years of “more of the same” until the transition at 50,000 years ago? Again, that’s one of the things that used to be more obvious than it now appears. Most of the archaeology came initially from sites in Europe and owed a lot (as it still does) to mining, railroad construction, and enough educated people in small towns (typically clergy and physicians, plus the visiting engineers) to take an interest in what some laborer found and report it to a relevant professor in a larger city, who might then come and investigate. One of the early distinctions was between the Levallois “flake” technology and a finer “blade” technology. The flakes were seen at older dates and sometimes in association with bones of Neanderthals. The blades were found mostly in the more recent layers, from after Cro-Magnon humans had arrived. So blades came to be seen as part of the behaviorally modern suite of traits. People who liked to arrange things in ladders saw the older Neanderthals as producing tools that were less refined than those of the more modern types of hominid. It sounded sensible but, thanks to a lot of hard work by the archaeologists, this hypothesis has been breaking down, as I mentioned earlier. Science is like that. Propose an explanation, and other scientists will take it as a challenge. Some scientists will even undertake to disprove their own hypothesis. (You want to find the flaws yourself, and go on to find a better explanation, before others do it for you.) A more careful look at the European sites showed that they don’t reliably segregate into a Neanderthals-and-flakes cluster versus a moderns-with-blades cluster. Worse, the blade technology has been found in Africa at about 280,000 years ago, which is back even before anatomically modern humans were on the scene. 
So behaviorally modern Africans might have brought the blade technology along with them when they emigrated to Europe, but the Neanderthals seem to have known a good thing when they saw it. Also, blades can be seen as just a refinement of the staged toolmaking needed for flakes, not another big step up in toolmaking technology. Instead of preparing a core to have the tentlike sloping sides meeting at a ridgeline, which is what produces the triangular shape of the flake, the core for a blade tends to have one end flattened, so that there is a nice right-angle cliff. Like jumping on the edge of a cliff (what boys sometimes try on a dare, jumping to safety as the leading edge collapses), the toolmaker uses a pusher piece (say, a small bone) to stand atop the core’s “cliff edge.” A tap on this punch with a rock hammer will then shear off a blade.

Obsidian core and blades, National Museum of Kenya, Nairobi

Using a punch ensured that the pressure focused on a narrow edge. This technique allowed a thin blade to be sheared off – but it is not uniformly thick. Instead it often thins down to one sharp edge. It can be grasped in the manner that we would hold a single-edged razor blade. Or it can be mounted in a handle, perhaps held in a split stick. Because a whole series of thin blades could be sheared off from the prepared core, it was quite efficient in conserving raw material.

Other things were changing in this period, but it is hard to tell because Homo sapiens population densities were so low in Africa. They were spread even thinner than historic hunter-gatherers. In southern Africa, they were eating eland, a large antelope adapted to dry country, quite regularly – but they were not often eating the more dangerous prey like pigs and cape buffalo, which can be quite aggressive toward hunters. They generally didn’t fish. They didn’t transport stone over long distances, even though making blades. When they buried their dead, there were no grave goods or evidences of ceremony. Campsites did begin to show some evidence of organization. So this isn’t just toolmaking.

Anatomically modern Homo sapiens of Africa were not conspicuously successful like the behaviorally modern Africans that followed. They certainly didn’t leave much evidence for a life of the mind. Perhaps archaeologists will dig in the right place and change this view, but a half-century of testing the hypothesis – that the bloom in creativity came long after Homo sapiens was on the scene – has led to the view that mere anatomical modernity was not the big step. The archaeologist Richard Klein summarizes the data this way:

[The] people who lived in Africa between 130,000 and 50,000 years ago may have been modern or near-modern in form, but they were behaviorally similar to the [European] Neanderthals. Like the Neanderthals, they commonly struck stone flakes or flake-blades (elongated flakes) from cores they had carefully prepared in advance; they often collected naturally occurring pigments, perhaps because they were attracted by the colors; they apparently built fires at will; they buried their dead, at least on occasion; and they routinely acquired large mammals as food. In these respects and perhaps others, they may have been advanced over their predecessors. Yet, in common with both earlier people and their Neanderthal contemporaries, they manufactured a relatively small range of recognizable stone tool types; their artifact assemblages varied remarkably little through time and space (despite notable environmental variation); they obtained stone raw material mostly from local sources (suggesting relatively small home ranges or very simple social networks); they rarely if ever utilized bone, ivory, or shell to produce formal artifacts; they buried their dead without grave goods or any other compelling evidence for ritual or ceremony; they left little or no evidence for structures or for any other formal modification of their campsites; they were relatively ineffective hunter-gatherers who lacked, for example, the ability to fish; their populations were apparently very sparse, even by historic hunter-gatherer standards; and they left no compelling evidence for art or decoration.

So it’s not just Neanderthals – people who looked like us were not exhibiting much in the way of what we associate with a versatile intelligence.

Clearly, having a big brain is not sufficient to produce spectacular results. It must have taken something more. The Neanderthal brain is actually somewhat bigger on average than the early modern brain (though, when you correct for the larger Neanderthal body, the difference seems minor). But that’s just the final example of why bigger brains aren’t necessarily smarter. Brain size earlier increased over many periods when there wasn’t much progress in toolmaking. Whatever was driving brain size must have been something that didn’t leave durable evidence much of the time – say, social intelligence – perhaps even something that didn’t generally improve intelligence.

For example, when you approach grazing animals, you can get a lot closer if the animals haven’t been spooked recently by a lot of previous hunters. There is a version of this in evolutionary time too, as the hunted animals that spook easily are the ones that reproduce better. Hundreds of generations later, the hunters have to be more skillful just to maintain the same yield. (When hominids made it out into Eurasia, the grazing animals there were surely naïve in comparison to African species, which had coevolved with hunting hominids.) Lengthening approach distances, as African herds evolved to become more wary since early erectus days, would have gradually required more accurate throws (just double your target distance and it will become about eight times more difficult). This is the Red Queen Principle, as when Alice was told that you had to run faster just to stay in the same place. The Red Queen may be the patron saint of the Homo lineage. Even if they had no ambition to get better and better, the increasing approach distances forced them to improve their technique. That’s just one example of things that, like protolanguage, are invisible to archaeology but that could have driven brain reorganization (and with it, perhaps brain size). So while the early moderns had a lot going for them, I suspect that it didn’t yet include a very versatile mental life. They may have had a lot of words, but not an ability to think long complicated thoughts. Still, they might have been able to think simple thoughts at one remove.

Understanding another as an animate being, able to make things happen, is not the same thing as ascribing thoughts and knowledge to that individual. Babies can distinguish animate beings from inanimate objects quite early, but it is much later, usually after several years’ experience with language, that they begin to get the idea that others have a different knowledge base than they have. A simple way of testing this with a child is to use a doll named “Sally.” Both the child and Sally observe a banana being placed inside one of two covered boxes. Then Sally “leaves the room” and the experimenter, with the child watching, moves the banana to the other box and replaces the lids. Now Sally “returns to the room.” The child is asked where Sally is going to look for the banana. A three-year-old child is likely to say that Sally will look in the box where the banana currently is. A five-year-old will recognize that Sally doesn’t know about the banana being moved in her absence and that she will therefore look in the original box. Being able to distinguish between your own knowledge and that of another takes time to develop in childhood. Still, even chimpanzees have the rudiments of a “theory of mind,” which is what we call this advanced ability to understand others as having intentions, goals, strategies, attention that can be directed, and their own knowledge base. Being able to put yourself in someone else’s shoes, one setup for empathy, is not an all-or-nothing skill. Though this “theory of [another’s] mind” has an aspect of operating at one remove – and thus of having some semblance of structured thought – it may have evolved before the structured suite that I will discuss in the next chapter at some length.

Mirror neurons are in style as a candidate for the transition but, while it is exciting stuff, I suspect that “see one, do one” imitation wasn’t the main thing. Mirroring is the tendency of two people in conversation to mimic one another’s postures and gestures after some delay. Cough, touch your hair, or cross your legs and your friend may do the same in the next minute or so. Many such movements are socially contagious but they are usually standard gestures, lacking in novelty. While mirroring reminds most people of imitation, imitation actually suggests something more novel than triggering an item of your standard repertoire of gestures. But it does represent a widespread notion that observation can be readily translated into movement commands for action (“see one, do one”) and that’s what lies at the heart of the neurobiologists’ excitement over this. It’s a neat idea, though there are some beginners’ mistakes to avoid. For one thing, “monkey see, monkey do” is not as common in monkeys as the adage suggests. Not even in chimpanzees does it work well. Michael Tomasello and his colleagues removed a young chimpanzee from a play group and taught her two different arbitrary signals by means of which she obtained desired food from a human. “When she was then returned to the group and used these same gestures to obtain food from a human, there was not one instance of another individual reproducing either of the new gestures – even though all of the other individuals were observing the gesturer and highly motivated for the food.” Chimps do not engage in much teaching, either. No one points, shows them things, or engages in similar nonlanguage ways that humans interact with children. There isn’t much instructive joint attention, outside of modern humans.

If the brain is going to imitate the actions of another, it will need somehow to convert a visual analysis into a movement sequence of its own. Perhaps it will have some neurons that become active both when performing an action – say, scooping a raisin out of a hole – and when merely observing someone else do it, but not moving. Such neurons have indeed been found in the monkey’s brain, and in an area of frontal lobe that, in humans, is also used for language tasks. Yet it is easy to jump to conclusions here if you aren’t actually engaged in the research. These “mirror neurons” need not be involved in imitation (the sequence of “see one, do one”) nor even involved in planning the action. Every time someone mentions mirror neurons as part of a neural circuitry for “see one, do one” mimicry, the researchers will patiently point out that what they observe could equally be neurons involved in simply understanding what’s going on. Just as in language, where we know that there is a big difference in difficulty between passively understanding a sentence and being able to actively construct and pronounce such a sentence, so the observed mirror neurons might just be in the understanding business rather than the movement mimicry business. But the videos of mirror neurons firing away when the monkey is simply watching another monkey, and in the same way as when the monkey himself makes the movements, have fired the imaginations of brain researchers looking for a way to relate sensory stuff with movement planning and inspired experiments designed to tease apart the possibilities. It’s an exciting prospect for language because of the motor theory of speech understanding, put forward by Alvin Liberman back in 1967. Elaborated by others in the last few decades, it claims that tuning in to a novel speech sound is, in part, a movement task. That’s because categorizing certain sounds (“hearing” them) may involve subvocal attempts to create the same sound. 
The recognition categories seem related to the class of movement that it would take to mimic it. More and better imitation, perhaps enabled by more “mirror neurons,” might be involved in the cultural spread of communicative gestures and perhaps even vocalizations. Humans are extraordinary mimics, compared to monkeys and apes, and it surely helps in the cultural spread of language and toolmaking. So did we get a lot more mirror neurons at the transition? Or did an already augmented human mirroring system merely help spread another biological or cultural invention in a profound way, much as it might have spread around toolmaking, food preparation, and protolanguage?

Mirroring had likely been aiding in the gradual development of novel vocalizations and words for some time before the transition to behaviorally modern. Anything that helped humans more rapidly to acquire new words could have sped up the onset of widespread language. But you don’t want to confuse a mechanism that amplifies the effect of the real thing with, well, the real thing itself.

Framing is only protostructure and it is a commonplace of perception, shared by the other animals and surely by Homo sapiens back before the transition. The surroundings make a big difference. The spots are the same shade of gray, but the square black surround lightens our perception of the surrounded spot. In the moon illusion, the moon looks a lot larger when framed near the horizon.

Similar effects happen in cognition, where we call it “context.” You interpret the word lead quite differently depending on whether the context is a pencil, a leash, a heavy metal, a horse race, or a journalist’s first paragraph. In movement planning – the other half of cognition but often ignored by people who write on the subject – the reports of the present position of your body serve to frame the plan, as does the space around you (say, your target if hammering or clubbing). The tentative movement plan itself frames the next round of perception. We frame things in sequence, as in our anthropological preoccupation with what comes first and what follows. And when we get to language, some of those closed-class words such as left, below, behind, before serve to query for elements of the frame in space and time. Framing continues to be a major player at higher cognitive levels, as we will see in the creativity chapter.

The big step up might involve the evolutionary equivalent of tacking on another of Piaget’s developmental stages seen in children. Still another formulation has to do with augmenting “primary process” in our mental lives. The notion is that it got an addition called “secondary process.” The distinction was one of Freud’s enduring insights, though much modified in a century’s time. Primary process connotations include simple perception and a sense of timelessness. You also easily conflate ideas, and may not be able to keep track of the circumstances where you learned something. Primary process stuff is illogical and not particularly symbolic. You consequently engage in displacement behaviors, as in kicking the dog when frustrated by something else. There is a lot of automatic, immediate evaluation of people, objects, and events in one’s life, occurring without intention or awareness, having strong effects on one’s decisions, and driven by the immediacy of here-and-now needs. While one occasionally sees an adult who largely functions in primary process, it’s more characteristic of the young child who hasn’t yet learned that others have a different point of view and different knowledge. Secondary process is now seen as layered atop primary process, and is much more symbolic. Secondary stuff involves symbolic representations of an experience, not merely the experience itself. It is capable of being logical, even occasionally achieves it. You can have extensive shared reference, the intersubjectivity that makes institutions (and political parties) possible. Modern neuropsychological tests of frontal lobe function usually look at an individual’s ability to change behavioral “set” in midstream. For example, the person is asked to sort a deck of cards into two piles, with the experimenter saying yes or no after each card is laid down. Soon the person gets the idea that black cards go in the left pile, red suits in the right pile. 
But halfway through the deck, the experimenter changes strategy and says yes only after face cards are placed in the left pile, numbered ones in the right pile. Normal people catch on to the new strategy within a few cards and thereafter get a monotonous string of affirmations. But people with impaired frontal lobe function may fail to switch strategies, sticking to the formerly correct strategy for a long time. This flexibility may be part of the modern suite of behaviors, or it might come a bit earlier.

People who have only protolanguage, not syntax, provide some insight about the premodern condition. What might it have been like, to be anatomically modern, with a sizeable vocabulary – but with no syntax, no ability to quickly generate structured thoughts and winnow the nonsense from them? The feral children and the locked-in-a-closet abused children are possible models, but they generally have a lot wrong with them by the time they are studied for signs of higher intellectual functions. A better example is the deaf child of hearing parents where everything else about the child’s rearing and health is usually normal except for the lack of exposure to a structured language during the critical preschool years. (Deaf children exposed to a structured sign language in their early years via deaf parents or in deaf childcare will pick up its syntax just as readily as hearing children infer syntax from speech patterns.) The average age at which congenital deafness is diagnosed in the United States is when the child is three years old – which means that some aren’t tested until they start school. Certainly, one of life’s major tragedies occurs when a deaf child is not recognized as being deaf until well after the major windows of opportunity for softwiring the brain in early childhood have closed. Oliver Sacks’ description of an eleven-year-old deaf boy, reared without sign language for his first ten years because they mistakenly thought he was mentally retarded, shows what mental life is like when you can’t structure things:

Joseph saw, distinguished, categorized, used; he had no problems with perceptual categorization or generalization, but he could not, it seemed, go much beyond this, hold abstract ideas in mind, reflect, play, plan. He seemed completely literal — unable to juggle images or hypotheses or possibilities, unable to enter an imaginative or figurative realm.... He seemed, like an animal, or an infant, to be stuck in the present, to be confined to literal and immediate perception.

That’s what lack of opportunity to tune up to the structural aspects of language can do. So a child born both deaf and blind has little opportunity to softwire a brain capable of structured consciousness. (But what about Helen Keller and her rich inner life? Fortunately she wasn’t born blind and deaf, as the usual story has it. She probably had 19 months of normal exposure to language before being stricken by meningitis. By 18 months, some children are starting to express structured sentences, showing that they had been understanding them even earlier. Helen Keller probably softwired her brain for structured stuff like syntax before losing her sight and sound.) So the premodern mind might well have been something like Joseph’s, able to handle a few words at a time but not future or past tense, not long nested sentences. A premodern likely had thought, in Freud’s sense of trial action. But without structuring, you cannot create sentences of any length or complexity – and you likely cannot think such thoughts, either. Imagine people like Joseph but speaking short sentences instead of signing them – yet having no higher cognitive functions, “unable to juggle images or hypotheses or possibilities, unable to enter an imaginative or figurative realm.” That’s what Homo sapiens might have been like before they somehow invented behavioral modernity.

We get tremory – Joanne Sydney Lessner and Joshua Rosenblum, from the libretto of “Einstein’s Dreams”

Alfred Russel Wallace figured out natural selection in 1858 on his own (except for several close friends, Darwin had kept quiet about it after he figured it out in 1838). But Wallace later had a great deal of trouble with whether humans could be explained by the evolutionary process as it was then understood. He couldn’t understand how physiology and anatomy were ever going to explain consciousness. (Some progress has finally been made in recent years.) And he said that a “savage” (and Wallace had a high opinion of them) possessed a brain apparently far too highly developed for his immediate needs, but essential for advanced civilization and moral advancement. Since evolution proceeds on immediate utility and the savage’s way of life was the way of our ancestors, how could that be? It sounds like preparation, evolution “thinking ahead” in a way that known evolutionary mechanisms cannot do. (Still a troublesome question; the next chapter will tackle it.) They are both excellent observations, ones that have troubled many scientists and philosophers since Wallace stated them so well. Wallace went on to conclude, however, that some “overruling intelligence” had guided evolution in the case of humans. “I differ grievously from you,” Darwin wrote to Wallace in April 1869, “and I am very sorry for it.” Darwin, like most neuroscientists and evolutionary scientists today, would rather leave the question open than answer it in Wallace’s manner. “I can see no necessity for calling in an additional … cause in regard to Man,” Darwin wrote to Wallace. A mystery, Daniel Dennett reminds us in his book Consciousness Explained, “is a phenomenon that people don't know how to think about – yet.” To the people who actually work on Wallace’s two problems, they are no longer the mystery they once were. We have great hopes of eventually proving Ambrose Bierce wrong:

Mind, n. A mysterious form of matter secreted by the brain. Its chief activity consists in the endeavor to ascertain its own nature, the futility of the attempt being due to the fact that it has nothing but itself to know itself with. –The Devil's Dictionary

I say this, recognizing that no one person’s mind can do the job of understanding mind and brain. But collectively and over time, science manages amazing feats. We may not know all the answers about how the modern mind emerged from the ancestral mentality, but it looks as if it can be understood without calling in mysterious forces.

We human beings, unlike all other species on the planet, are knowers. We are the only ones who have figured out what we are, and where we are, in this great universe. And we're even beginning to figure out how we got here. – Daniel C. Dennett, 2003

copyright ©2003 by William H. Calvin

You can easily make a symmetrical face look crooked. You can distort size and shape, as is nicely shown in Roger Shepard’s “Turning the Tables” illusion. The two tabletops are actually the same size and shape but the legs and drop-shadows make all the difference in how you judge them. (Go ahead, measure them. If that doesn’t convince you, make a cutout of a tabletop and reposition it over the other one.)