|

Conversations with Neil’s Brain The Neural Nature of Thought & Language Copyright 1994 by William H. Calvin and George A. Ojemann. You may download this for personal reading but may not redistribute or archive without permission (exception: teachers should feel free to print out a chapter and photocopy it for students). William H. Calvin, Ph.D., is a neurophysiologist on the faculty of the Department of Psychiatry and Behavioral Sciences, University of Washington. George A. Ojemann, M.D., is a neurosurgeon and neurophysiologist on the faculty of the Department of Neurological Surgery, University of Washington. |

|

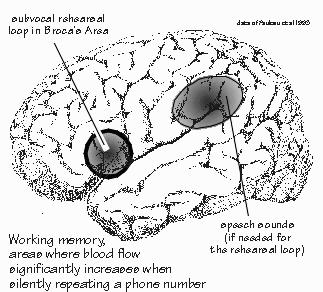

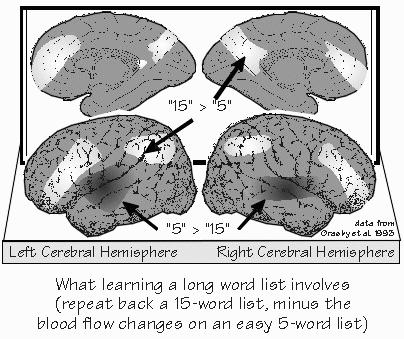

How Are Memories Made?

NEIL WAS GOING INTO THE HOSPITAL for a few days for more diagnostic workup. George wanted round-the-clock monitoring of his EEG, needing to double-check that Neil’s seizures always began in his left temporal lobe, and not his left frontal lobe. We were sitting outside in a summer breeze. Neil wanted to get some sunshine while he still had the chance.

The first thing to realize about human memory, I said, is that it doesn’t work like any known filing system, including computers and videotape. The second thing is to think process rather than place.

“But I thought the hippocampus was the place?” Neil said. “Not so?”

There are some important places, but they’re probably not where the information is really stored in the long run. And the places don’t tell you much about the mechanisms. It seems that the how of memory is a layer of mechanism deeper than the what and where.

We backed into the topic by considering the size of the “buffers” and “RAM.” There are some interesting clues, just from the size limitations you encounter. The capacity of immediate memory is indicated by the title of the psychologist George Miller’s study of it in 1956: The Magical Number Seven, Plus or Minus Two. It’s working memory, where the subject gets to rehearse those seven items of information.

“Like trying to remember a phone number without writing it down, holding onto it long enough to dial it,” Neil said.

Broca’s area seems to play an important role in that kind of working memory, I explained. Some people can only hold onto five digits, some can manage nine, but the average is about seven. That’s how many we can hold in sequence long enough to repeat them back or dial a phone. Anything longer and, unless part of it is very familiar to us already, we have to use crutches. With perhaps 15 digits to place an international call, we have to do something, such as writing it down and then reading it back digit by digit.
Without writing, the common mental trick is to subdivide the problem whenever approaching the seven-digit limit, a process that has become known as chunking.

[FIGURE 42 Working memory: subvocal rehearsal of phone numbers]

Most of us can manage longer sequences this way: remembering the international-call access number separately (011), the international dialing code for the United Kingdom separately (44), then the code for Central London (71), and then the familiar prefix (338) at University College London, followed by the four-digit extension. That’s eight chunks total (four familiar codes plus the four extension digits), rather than the fourteen separate units stored by the memory phone button.

“So the limitation seems to be on the number of chunks rather than on the total bits of information.”

Exactly. We make the individual chunk represent more, since packaging is everything. That’s a lot of what building your vocabulary is all about, making one word replace a longer, roundabout phrase. The linguist Philip Lieberman thinks that efficiencies like that were an important part of the evolution of language from the ape level to the human one, that this limitation on working memory otherwise limits one to saying very simple things.

[FIGURE 43 Cortical blood flow when learning a long word list]

Suppose I were to read aloud a list of five names, and then ask you to repeat them back in any order. Nearly everyone can do this task. But when the list is fifteen words long, you’d be lucky to get seven or eight. During either chore, many areas of your brain would receive increased blood flow. By subtracting the map for the five-word list from the map for the fifteen-word list, you can get some idea of the brain areas that are involved in trying to chunk. The frontal lobe is busy on both sides of the brain, as are the posterior parietal areas that are ordinarily involved in visual-spatial tasks.
And since the test is all verbal, done with the eyes closed, it suggests that many subjects were making some use of the traditional mnemonic techniques involving imagining a list, or “placing” the items in the rooms of a large mansion. If each word of a seven-word sentence stands for something very simple, like a digit, then the sentence itself cannot say much. But if each word stands for a whole concept and its many connotations, then a unique seven-word sentence can encompass much. Chunking creates new categories. Some are as temporary as that memory mansion. Other chunks get used often enough to become part of your private vocabulary. |
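
The chunking arithmetic for the phone number discussed above can be sketched in a few lines of Python. This is only a toy illustration, not a model of working memory: the extension digits are made up, the helper names are hypothetical, and the span check simply treats "seven, plus or minus two" as an upper limit of nine items.

```python
# Toy illustration of chunking; the extension digits and helper names are assumptions.
ACCESS, COUNTRY, CITY, PREFIX = "011", "44", "71", "338"  # codes quoted in the text
EXTENSION = "1234"  # hypothetical four-digit extension, not from the text

def within_span(items, span=7, tolerance=2):
    """Return True if the item count fits the 'seven, plus or minus two' limit."""
    return len(items) <= span + tolerance

# Unchunked: every digit is a separate item to hold in mind.
digits = list(ACCESS + COUNTRY + CITY + PREFIX + EXTENSION)

# Chunked: each familiar code counts as one item; the unfamiliar
# extension still has to be held digit by digit.
chunks = [ACCESS, COUNTRY, CITY, PREFIX] + list(EXTENSION)

print(len(digits), within_span(digits))  # 14 False
print(len(chunks), within_span(chunks))  # 8 True
```

The same information is carried either way; only the number of items that must be rehearsed changes.
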

|

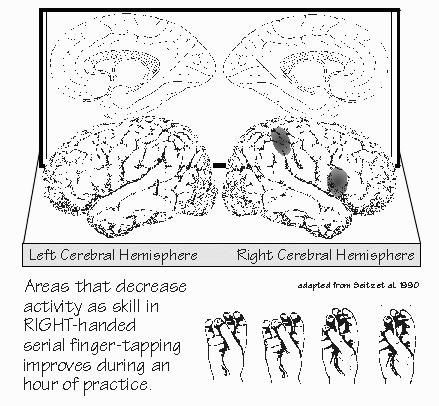

CONTINUOUS NEURONAL ACTIVITY has always been assumed to be the substrate of working memory, what goes on during rehearsal. There isn’t much evidence one way or the other for that assumption, although recording of neuron activity in monkeys has shown some frontal lobe neurons that are continuously active between the time a monkey matches two objects
and the time the memory of the object is retrieved. Neurons like that have also been found in the temporal and the parietal cortex, with the temporal neurons active during the memory storage phase of visual cues such as colors, while the parietal neurons were active during storage of memory for sensory discriminations. So working memory may be part of the brain concerned with perception of the material to be remembered — but maybe it’s neurons further along in the postsensory areas. In the monkey studies, the neurons changing activity with perception were usually different from those active during memory storage. “Okay,” Neil said, “immediate memory is just keeping the activity going, the perception that noticed the event in the first place. But what happens if I distract you? Prevent you from rehearsing it?” There might be various scratch pads that you can shift among — but eventually you’re going to fill them up and have to overwrite. I doubt this will be as simple as the number of windows you can keep open on a computer screen. Overwriting itself is interesting, as another substrate for post-distractional memory would be lingering synaptic changes from the earlier immediate memory, such as those enhanced releases of neurotransmitter and enhanced postsynaptic responses. From that, you might be able to reconstitute the activity present during working memory. It’s possible to record individually from some of the neurons in the temporal lobe, prior to their surgical removal. We’ve done this by sneaking up on them with a sharpened needle whose tip is in electrical contact with the tissue. As we get close to a neuron, its impulses can be heard through the loudspeaker. “That’s what you mean by `wiretapping’ a cell?” Exactly. It’s usually called “microelectrode recording.” The technique was invented about 1950, and it has greatly increased our knowledge about how the brain is functionally wired up, what neurons are interested in. 
But it’s time-consuming, and so it takes a while to build up a picture of what’s going on. We have to pool data from many patients to make any sense of it. George has recorded the electrical activity of individual neurons in the temporal cortex during the three-slide show. He found that over two-thirds of the neurons increase their activity when a new item of information enters memory. This increased activity continues for some seconds, longer than the time required for any language processing of that information. Activity then returns to baseline while the memory is stored. “So that’s how working memory might look, if you were just trying to keep the activity going after the fact.” The first time this information must be retrieved, the activity again increases — not as much as originally, and not in as many neurons, and not for as long, but nonetheless you can imagine some semblance of the original activity being reconstituted during recall attempts. The second time this item or information is retrieved, after being stored again, even fewer cells are active. The third time it’s retrieved, there is even less activity change. The neuron activity on initial acquisition and retrieval is so great that it may explain why recent memory is so prone to failure with aging, head injuries, brain loss of oxygen, and other conditions where neurons don’t work well. For under those conditions, there may not be enough neurons that can function, in this holding-the-information mode, to get the process started.  [FIGURE 44 Areas that decrease activity as finger-tapping skill improves ] Studies using blood flow changes in normal volunteers have shown something similar. There’s widespread activity when learning something new, such as tapping your fingers in a particular order. But once it’s learned, the activity changes seem more circumscribed. The location of the increased activity seems to be different, depending on whether the task being learned is motor or language. 
“So it takes less brain to handle something you know well from having rehearsed it,” Neil observed. “Which explains why procedural memory works in H.M. when his episodic memory doesn’t?” One can hope. But we’ve barely scratched the surface of those issues in research. All of that activity in the temporal cortex might not be the actual place of post-distractional, recent, explicit memory storage. It’s probably more like a promoter, facilitating change in a much smaller number of neurons that have the synaptic modifications responsible for the real memory storage. It’s the sequence of activity in the network of those cells that is probably the neural representation of the memory. “Yes, but what’s the recall of that memory?” |

|

THE NATURE OF THE RECALL is probably the key to the long-term-storage issue, and we don’t know for sure what recall comprises. It’s reconstitution of some of the original activity patterns, surely, but how extensively? Enough, probably, to somehow trigger the correct motor response, pronouncing the name just as you did during the initial presentation.

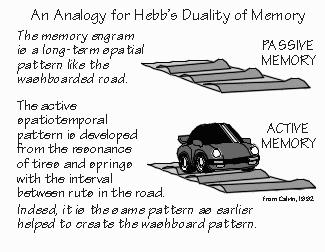

Pronunciation requires a spatiotemporal sequence of impulses to drive the various muscles
involved in saying the word or writing out the response. “Spatiotemporal sequence? Sounds like reading music.” The standard example would probably be the simple oscillation involved in the muscle commands for chewing or breathing: first, one set of muscles contracts; then those muscles stop and another antagonistic set of muscles starts firing. And it’s not just two sets but dozens of different muscles involved in walking. Just imagine shifting from a walk into a run. That requires a spinal cord to produce a different spatiotemporal pattern of commands to all those muscles. Sometimes it’s a one-shot sequence, as when you throw something. That’s very much like a player piano, where you are programming 88 different keys at different times in overlapping combinations. Changing the target distance, so you don’t overshoot the target on the next trial, requires that you modify the temporal sequence of commands to that spatial set of muscles — and indeed, there are about 88 muscles involved in throwing, making the spatiotemporal pattern for a movement even more similar to the roll on a player piano that houses a spatiotemporal pattern which produces a melody. “A sheet of music is just the code for recreating a spatiotemporal pattern,” Neil mused. “I like that.” Most researchers would say that the evocation of a memory was creating a spatiotemporal sequence of neuron firings — probably a spatiotemporal sequence similar to that present at the time of the input to memory, just shorn of some of the nonessential frills that promoted it. We call it a Hebbian cell assembly. The Canadian psychologist Donald Hebb thought through the problem in 1949, well in advance of the data, even before anyone had started recording from single neurons in a primate brain. “So it’s like one of those message boards in a stadium, with lots of little lights flashing on and off, but creating a pattern. 
In time and space.” Yes, but I’d modify Hebb’s cell assembly in one regard by not anchoring the spatiotemporal pattern to particular cells, to make it more like the way the message board works. There, you can place the pattern of the apple in various parts of the array — or even scroll it along. The pattern continues to mean the same thing, even though it’s implemented by different lights. I’ve even suggested that the basic pattern is contained in a hexagon about 0.5 millimeters across, the equivalent of about 300 unitary elements. And that the pattern can clone itself — but that’s another book’s worth of explanation. “A half millimeter is like a thin pencil lead. That’s small, all right. How many cells does it take to make a Hebbian cell assembly for some memory? Say, a word? Or someone’s face?” Maybe only several dozen, judging from some of the work on temporal lobe neurons involved in face recognition. But that’s several dozen being active, with hundreds of others remaining relatively inactive at the same time. Lack of response is also part of the cortex’s representation of the message. It’s just like that message board: several dozen lights can trace out an apple, so long as the others stay dim and don’t confuse the sketch. Remember that the intermediate stored pattern may be pretty abstract, looking nothing like the input pattern. Or even the output spatiotemporal pattern that drives all those muscles. Just think of the cerebral code for “apple” as one of the bar codes on a grocery package. It may not look anything like an apple, but it serves to represent the apple. Neil considered this. “So learning a new item might involve creating a novel spatiotemporal pattern? That’s a new code?” Yes, and some regions might be particularly good at recording novel spatiotemporal patterns. You’ve got to hang onto some kinds of information for a while, just in case subsequent events prove that they were important. “Of course, you can make a lot of mistakes doing that. 
It’s one of the famous fallacies, assuming ‘after this, therefore because of this.’”

Even slugs do that, and I think that we have some even fancier ways of committing that fallacy. The input patterns that need saving are likely to be from the various specialized neurons that analyze the visual image. Saving those for an hour may be important when there are delayed consequences: say, getting sick from an unripe apple that you ate and wanting to avoid it next time. And we have much fancier versions, recognizing as familiar a face we first saw a week ago, even though we only saw it that one time before and we weren’t trying to memorize it.

“So memories are made of spatiotemporal patterns like those on message boards. But they’re stored forever in a different form? The synaptic strengths?”

[FIGURE 45 Hebb’s duality of memory and the resonance with connectivity] |

|

A DUAL TRACE MEMORY system seems to be required, just as Hebb also pointed out in 1949
— something like active and passive versions that are implemented in different ways. The active memory — that spatiotemporal pattern of activity — needs to create another pattern, a purely spatial pattern of synaptic strengths, with no explicit time component to it — after all, coma can silence most of the neuron firing in the brain but it doesn’t wipe out all those long-term memories. The pattern just lies there like the ruts in a washboarded road, waiting for something to resonate with it and recreate an active spatiotemporal pattern. You can imagine the hippocampus giving the cortex permission to modify its recently potentiated synapses, or spending a few days rehearsing the cortex to engrain those new memories in the manner of habits. When the memory is reliable, the synaptic strengths successfully recreate the right spatiotemporal pattern. “So now the problem is down to how to make those changes in synaptic strengths permanent?” Neil said. “Cast them in concrete. Pardon my construction analogies — I’ve been remodeling my house — but didn’t you say earlier that the short-term process created the forms for the later concrete? But the forms themselves are thrown away after the concrete has set up properly?” It may be more similar to the way that petrified wood is created. It’s as if the wooden forms became petrified, because the decomposable wood was gradually replaced by hard minerals that endure. For example, whatever temporarily enhances the synapses could serve as a stimulus to growth for the next few days, and so their standard amount of neurotransmitter release would become greater, more like the synapse released after the original experience during the temporary enhancement. Instead of growing larger, maybe the axon would bud off some more axon branches or create additional synaptic sites where there were none before. There is good evidence for that as part of a simple learning task in sea slugs. 
Further evidence that growth of synapses or formation of new synapses is important to forming long-term memories is the observation that drugs that block production of new proteins, the building blocks of new tissue, interfere with long-term memory formation. Indeed recent research into memory has been directed at many of the same questions as research into the causes of cancer: what regulates cell growth. Certainly the number of synapses per neuron increases considerably in the cerebral cortex of a rat when the rat is learning a lot, as when given enriched environments to explore. In the enriched rats, there are 80 percent more synapses than in rats housed in individual cages, and considerably more than in the rats that merely exercised on boring treadmills rather than exploring. The ability to increase the number of synapses, when going from a basic to an enriched environment, lasts throughout a rat’s lifetime. But the ability to correspondingly beef up the blood supply doesn’t. In addition to such use-dependent changes in synapses, there is evidence that simultaneous use of several different sets of synapses can enhance their synaptic strengths. Associative learning, as in Pavlov’s dog salivating at the sound of the bell, might use this type of synaptic modification. It was also predicted on theoretical grounds back in 1949. “Hebb again? The Hebbian synapse?” Neil asked, and I nodded. “So is there something they could give me to make my memory better? Anything that helps set up the cement? Or vitamins for the Hebbian synapse?” |

|

DRUGS TO IMPROVE MEMORY have long been sought, but seldom found. If memory involves a step of synaptic modification, you’d think that the various synapse-modifying drugs would make memory better. Or even worse. But specific actions on long-term memory recall are hard to find, even though there are various “anesthetics” that will prevent
short-term memories from ever being established as long-term memories. There are two chemicals that seem to have important roles in memory. One is acetylcholine. Drugs that block acetylcholine interfere with memory. Neurons that use acetylcholine as a transmitter influence activity in the hippocampus and are among the early cells lost in Alzheimer’s disease. Unfortunately, giving drugs to increase the supply of acetylcholine doesn’t improve the memory of Alzheimer’s patients. The other chemical is glutamate. While it’s one of the amino acids and widely used for building proteins everywhere in the body, it is also used as a neurotransmitter. The excitatory synapse of cerebral cortex where glutamate is the transmitter is, so far, the best candidate for a synapse that encodes memory, for a memory mechanism at the cell membrane level. There are at least two types of postsynaptic channels that open up when glutamate binds to their receptor molecules. One is pretty ordinary, as excitatory synapses go. It allows sodium ions into the dendrite, which raises its voltage temporarily. The other type of glutamate channel — named “NMDA” for reasons that are arcane and irrelevant — allows some calcium ions to enter the dendrite as well. But what’s really extraordinary about the NMDA channel is that it won’t open unless it has two signals at the same time: it takes both the right voltage and the right neurotransmitter to open up. That’s like a locked entrance door that requires a valid keycard to be stuck into a slot — but also requires that the power for the latch’s electronics be on. Until the discovery of the NMDA channel, all channels had been operated either by voltage alone (as in the sodium and potassium channels for the impulse) or by neurotransmitter alone. And certainly not both in combination, which allows it to detect near-simultaneous arrivals of inputs to the dendrite. That’s considerably more interesting than merely the combination of keycard and power. 
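
The two-signal requirement just described can be written as a small logic sketch. It is a deliberately crude toy, not a biophysical model: the function names are hypothetical, and both voltage figures are assumptions chosen only to make the comparison concrete.

```python
# Toy logic model of the two-signal requirement; all numbers are assumptions.
REST_MV = -70.0      # assumed resting voltage of the dendrite, in millivolts
RAISED_MV = -40.0    # assumed voltage after another input has arrived
UNBLOCK_MV = -50.0   # hypothetical voltage above which the channel can pass ions

def ordinary_channel_conducts(glutamate_bound: bool) -> bool:
    """The ordinary glutamate channel needs only the neurotransmitter."""
    return glutamate_bound

def nmda_channel_conducts(glutamate_bound: bool, membrane_mv: float) -> bool:
    """The NMDA channel needs bound glutamate AND a sufficiently raised voltage."""
    return glutamate_bound and membrane_mv >= UNBLOCK_MV

# Walk through the four combinations of the two signals.
for glutamate in (False, True):
    for voltage in (REST_MV, RAISED_MV):
        print(
            f"glutamate={glutamate!s:5} voltage={voltage:6.1f} mV  "
            f"ordinary={ordinary_channel_conducts(glutamate)!s:5} "
            f"NMDA={nmda_channel_conducts(glutamate, voltage)}"
        )
```

Only the last combination, neurotransmitter arriving while the dendrite's voltage is already raised, lets the NMDA channel conduct, which is what makes it a detector of near-simultaneous inputs.
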
In an NMDA pore, there is a plug. Typically a magnesium ion diffuses into the channel and gets trapped, unable to go all the way through. When a neurotransmitter binds to the channel’s receptor molecule, the gate may open, but no sodium or calcium flows through because of the magnesium ion plug. “So the channel opens, but that doesn’t do any good because it’s plugged? How do you unplug it?” That’s what’s so interesting. If the dendrite has received an input elsewhere, its voltage change may prevent the plug from getting stuck in the NMDA channel. And so, if the NMDA synapse is now activated by neurotransmitter, positive ions flow into the dendrite, creating a synaptic potential to add atop the original one. This is what is so exciting to neurophysiologists — the calcium entry points to a mechanism for short-term memory spanning many minutes. In the hippocampus (an old part of the cortex with a simpler layered structure), long-term potentiation sometimes lasts for days, and part of the reason for LTP is the NMDA business. “Do you suppose that NMDA also has something to do with my epilepsy?” When the NMDA channel is open for a long time — as in the early part of a seizure — a lot of calcium leaks through it into the cell. That may make for a strong synaptic change — unfortunately, making a memory of the spatiotemporal patterns leading up to a seizure. “And maybe making another seizure more likely?” That’s one worry. The excess calcium entry has another consequence. A lot of calcium is toxic to cells — it can even kill them. That’s a major way that lack of oxygen kills cells, in the hours following a stroke, by opening up the NMDA channels and allowing calcium in. So overstimulating NMDA receptors may damage neurons in the long run, one reason that repeated seizures may be bad for you in a way that a single seizure isn’t. 
Seizures that are repeated might also tap into a repetition mechanism that the brain uses to make weak memories more secure, to promote their retention in the long run. Repetitions, as in a child practicing handwriting, are what distinguish procedural memories from episodic ones. It’s why you remember a new name better if you repeat it aloud after being introduced to someone. “So the brain might beef up an episodic memory by automatically recalling it a few times while it’s fresh?” For example, your hippocampus could trigger your cortex to run through some of its recent routines, and thereby solidify them. This refresher course might never reach the level of any conscious awareness, although I can imagine it showing up as a nighttime dream. “Trigger? How so?” Just imagine the hippocampus playing back a partial spatiotemporal pattern to the cortex — maybe a fragment of something from the previous week. If the cortex resonates to that pattern and isn’t busy doing something else, then it might wind up filling in the complete spatiotemporal pattern — rather in the way that, upon hearing the first few notes of a melody, we can fill in the rest of the stanza. I’m just guessing now, but hippocampal priming is a way that the cortical pattern could be repeatedly exercised, and perhaps increasingly embedded into the synaptic strengths — just as procedural memories are probably created, without hippocampal help. “So that’s why the hippocampus is essential for creating new episodic memories but isn’t essential for creating procedural-type memories? One part of the brain is just rehearsing another, to get the episodic stuff embedded?” That’s how I piece together the present-day evidence — but it’s surely not the only important thing the hippocampus is doing. 
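
The idea of a fragment being filled in to the complete pattern can be illustrated with a Hopfield-style network, a standard textbook device that the text itself does not name. The sketch below is an assumption-laden toy: the two eight-unit patterns are invented, the units take values of +1 (active) or -1 (quiet), and the weights are set with a simple Hebbian rule (units that are active together get a stronger connection). It makes no claim about how hippocampus and cortex actually interact.

```python
# Hopfield-style pattern completion; patterns, sizes, and names are illustrative assumptions.
APPLE = [1, -1, 1, 1, -1, -1, 1, -1]   # invented pattern; +1 = active, -1 = quiet
PEAR = [1, 1, -1, 1, -1, 1, -1, -1]    # second invented pattern sharing the same weights

def hebbian_weights(patterns):
    """Store patterns as purely spatial connection strengths (no time component)."""
    n = len(patterns[0])
    weights = [[0] * n for _ in range(n)]
    for pattern in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += pattern[i] * pattern[j]
    return weights

def recall(weights, cue, max_steps=5):
    """Update all units together until the activity pattern stops changing."""
    state = list(cue)
    for _ in range(max_steps):
        new_state = []
        for i, row in enumerate(weights):
            drive = sum(w * s for w, s in zip(row, state))
            if drive > 0:
                new_state.append(1)
            elif drive < 0:
                new_state.append(-1)
            else:
                new_state.append(state[i])  # no net input: keep the current value
        if new_state == state:
            break
        state = new_state
    return state

weights = hebbian_weights([APPLE, PEAR])
fragment = [1, -1, 1, 0, 0, 0, 0, 0]   # first three units of APPLE; 0 = unknown
completed = recall(weights, fragment)
print(completed == APPLE)  # True
```

The weights play the part of the passive trace and the recalled state the part of the active one: a three-unit fragment is enough for the stored connection strengths to regenerate the whole pattern.
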
One of the nice features of such a model is that rehearsal occurs when the cortex is not otherwise occupied, which provides an important role for sleep in the scheme of things; we’ve long wondered why lack of sleep interferes with memory consolidation.

“You mean there’s finally a reply to that child’s unanswerable question, ‘Daddy, why do I sleep?’”

Not so far, but you can see some testable ideas starting to appear; it’s another one of those “tune-in-next-year” topics. The sequential episode (your memory of a car accident, or of a snatch of conversation you overheard) is a hard case for memory mechanisms, since it doesn’t repeat, and so it may need the offline rehearsal more than the other types of memories. The sequential episode may be difficult, but it’s not impossible. Indeed, language is all about constructing unique sequences, and so is planning ahead for tomorrow. We humans do those things better than the other primates, so I’d expect to see a brain specialization for handling the episodic sequence.

INSTRUCTORS: You may create hypertext links to glossary items in THE CEREBRAL CODE if teaching from Chapters 6-8, e.g., Postsynaptic (http://www.williamcalvin.com/bk9gloss.html#postsynaptic) |

Conversations with Neil's Brain: The Neural Nature of Thought and Language (Addison-Wesley, 1994), co-authored with my neurosurgeon colleague, George Ojemann. It's a tour of the human cerebral cortex, conducted from the operating room, and has been on the New Scientist bestseller list of science books. It is suitable for biology and cognitive neuroscience supplementary reading lists. ISBN 0-201-48337-8. | AVAILABILITY widespread (softcover, US$12).

|